StereoKit Sample Code

Here is a list of small demos that illustrate how certain parts of StereoKit work!

Asset Enumeration

If you need to take a peek at what’s currently loaded, StereoKit has a couple of tools in the Assets class!

This demo is just a quick illustration of how to enumerate through your Assets.
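As a rough sketch of what that enumeration can look like, the snippet below logs every loaded asset and then filters to a single type. It assumes `Assets.All`, `Assets.Type` and the `Id` property on `IAsset` are available in your StereoKit version; the app name is just a placeholder.

```csharp
using StereoKit;

class Program
{
    static void Main()
    {
        if (!SK.Initialize(new SKSettings { appName = "Asset Enumeration" })) return;

        // Log the type and id of every asset currently loaded, including
        // StereoKit's built-in defaults.
        foreach (IAsset asset in Assets.All)
            Log.Info($"{asset.GetType().Name}: {asset.Id}");

        // Narrow the list down to a single asset type.
        foreach (IAsset asset in Assets.Type(typeof(Material)))
            Log.Info($"Material: {asset.Id}");

        SK.Run(() => { });
    }
}
```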

Controllers

While StereoKit prioritizes hand input, sometimes a controller has more precision! StereoKit provides access to any controllers via the Input.Controller function. This is a debug visualization of the controller data provided there.

StereoKit will simulate hands if only controllers are present, but it will not simulate controllers if only hands are present.
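A minimal sketch of reading controller data, not the demo's actual source: it draws the grip and aim poses of the right controller and scales a sphere with the trigger. The sizes and the choice of the right hand are arbitrary assumptions.

```csharp
using StereoKit;

class Program
{
    static void Main()
    {
        if (!SK.Initialize(new SKSettings { appName = "Controllers" })) return;

        SK.Run(() =>
        {
            Controller c = Input.Controller(Handed.Right);
            if (!c.IsTracked) return;

            // Show the grip pose and the aim pose as small axis gizmos.
            Lines.AddAxis(c.pose,    0.05f);
            Lines.AddAxis(c.aimPose, 0.05f);

            // Grow a sphere at the aim pose as the trigger is squeezed;
            // trigger is an analog value from 0 to 1.
            float size = 0.02f + c.trigger * 0.03f;
            Mesh.Sphere.Draw(Material.Default, Matrix.TS(c.aimPose.position, size));
        });
    }
}
```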

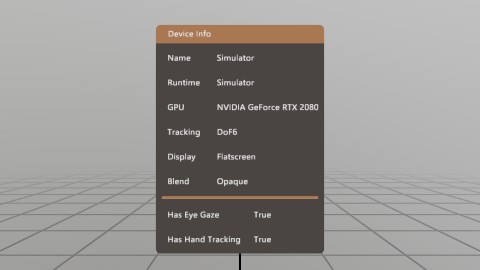

Device

The Device class contains a number of interesting bits of data about the device it’s running on! Most of this is just information, but there are a few properties that can also be modified.

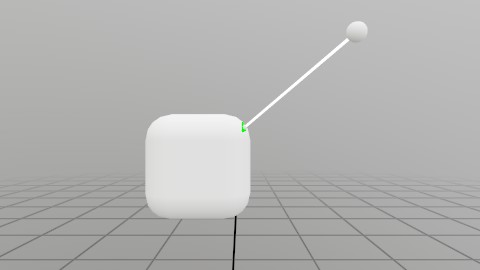

Eye Tracking

If the hardware supports it, and permissions are granted, eye tracking is as simple as grabbing Input.Eyes!

This scene casts your eye ray against the indicated plane, and the dot’s red/green color indicates eye tracking availability! On flatscreen you can simulate eye tracking with Alt+Mouse.
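The idea can be sketched roughly as follows. The plane's position and orientation and the dot size are assumptions chosen for illustration, not values taken from the demo.

```csharp
using StereoKit;

class Program
{
    static void Main()
    {
        if (!SK.Initialize(new SKSettings { appName = "Eye Tracking" })) return;

        // A vertical plane half a meter in front of the origin, facing the user.
        // (Placement is an assumption for this sketch.)
        Plane plane = new Plane(new Vec3(0, 0, -0.5f), new Vec3(0, 0, 1));

        SK.Run(() =>
        {
            // Intersect the eye ray with the plane and draw a dot at the hit point.
            if (Input.Eyes.Ray.Intersect(plane, out Vec3 at))
            {
                // Green when eye tracking is active, red otherwise.
                Color state = Input.EyesTracked.IsActive()
                    ? new Color(0, 1, 0)
                    : new Color(1, 0, 0);
                Mesh.Sphere.Draw(Material.Default, Matrix.TS(at, 3 * U.cm), state);
            }
        });
    }
}
```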

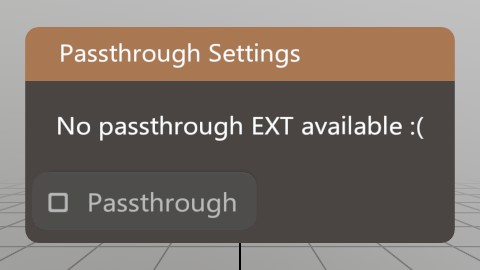

FB Passthrough Extension

Passthrough AR!

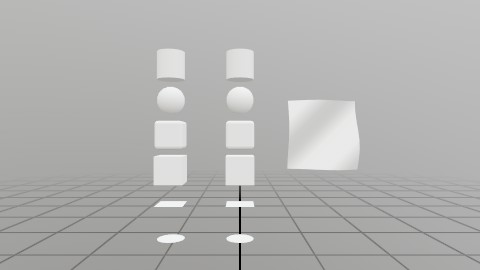

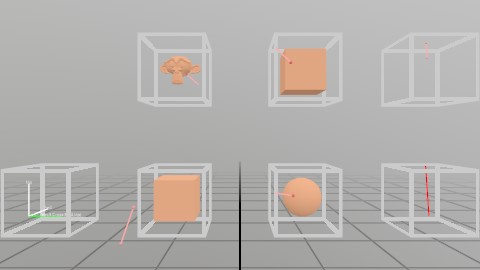

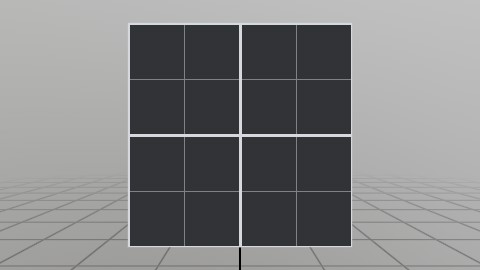

Mesh Generation

Generating a Mesh or Model via code can be an important task, and StereoKit provides a number of tools to make this pretty easy! In addition to the Default meshes, you can also generate a number of shapes, seen here. (See the Mesh.Gen functions)

If the provided shapes aren’t enough, it’s also pretty easy to procedurally assemble a mesh of your own from vertices and indices! That’s the wavy surface all the way to the right.
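Here is a small sketch of both approaches: two generated shapes, plus a single quad assembled by hand from four vertices and six indices. The dimensions and positions are arbitrary, and face culling is disabled on the material so the hand-built quad shows up regardless of which winding order your version expects.

```csharp
using StereoKit;

class Program
{
    static void Main()
    {
        if (!SK.Initialize(new SKSettings { appName = "Mesh Generation" })) return;

        // Shapes from the built-in generators.
        Mesh cube   = Mesh.GenerateCube(Vec3.One * 0.2f);
        Mesh sphere = Mesh.GenerateSphere(0.2f);

        // A flat quad assembled from raw vertices and triangle indices.
        Vertex[] verts = {
            new Vertex(new Vec3(-0.1f, 0, -0.1f), Vec3.Up),
            new Vertex(new Vec3( 0.1f, 0, -0.1f), Vec3.Up),
            new Vertex(new Vec3( 0.1f, 0,  0.1f), Vec3.Up),
            new Vertex(new Vec3(-0.1f, 0,  0.1f), Vec3.Up),
        };
        uint[] inds = { 0, 1, 2,   0, 2, 3 };

        Mesh quad = new Mesh();
        quad.SetVerts(verts);
        quad.SetInds (inds);

        // Draw both sides of every face, so winding order doesn't matter here.
        Material mat = Material.Default.Copy();
        mat.FaceCull = Cull.None;

        SK.Run(() =>
        {
            cube  .Draw(mat, Matrix.T(new Vec3(-0.3f, 0, -0.5f)));
            sphere.Draw(mat, Matrix.T(new Vec3( 0,    0, -0.5f)));
            quad  .Draw(mat, Matrix.T(new Vec3( 0.3f, 0, -0.5f)));
        });
    }
}
```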

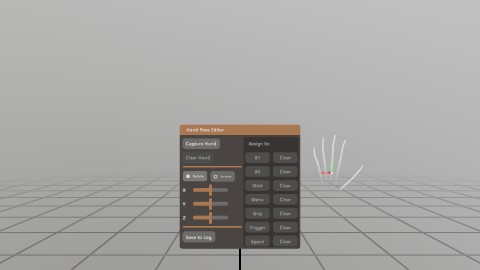

Hand Sim Poses

StereoKit simulates hand joints for controllers and mice, but sometimes you really just need to test a funky gesture!

The Input.HandSimPose functions allow you to customize how StereoKit simulates these hand poses, and this scene is a small tool to help you with capturing poses for these functions!

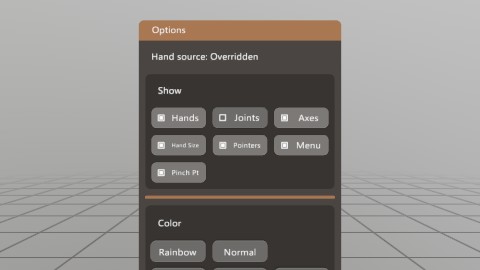

Hand Input

StereoKit uses a hands-first approach to user input! Even when hand sensors aren’t available, hand data is simulated using whatever input devices are present. Check out Input.Hand for all the cool data you get!

This demo is the source for the ‘Using Hands’ guide, and is a collection of different options and examples of how to get, use, and visualize Hand data.
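As a short illustration of the kind of data Input.Hand provides (a sketch, not the guide's own code), this marks the right index fingertip with a sphere that turns green while pinching, and logs when a pinch begins.

```csharp
using StereoKit;

class Program
{
    static void Main()
    {
        if (!SK.Initialize(new SKSettings { appName = "Hand Input" })) return;

        SK.Run(() =>
        {
            Hand hand = Input.Hand(Handed.Right);
            if (!hand.IsTracked) return;

            // Joints are indexed by finger and joint.
            Vec3 tip = hand[FingerId.Index, JointId.Tip].position;

            // Green while pinching, white otherwise.
            Color color = hand.IsPinched ? new Color(0, 1, 0) : new Color(1, 1, 1);
            Mesh.Sphere.Draw(Material.Default, Matrix.TS(tip, 0.02f), color);

            // IsJustPinched is only true on the frame the pinch starts.
            if (hand.IsJustPinched)
                Log.Info("Pinch started");
        });
    }
}
```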

Line Render

Lines

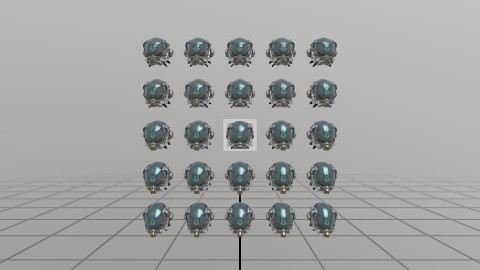

Many Objects

……

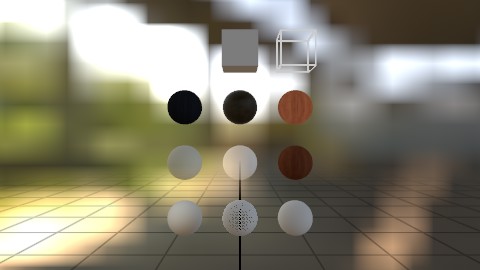

Materials

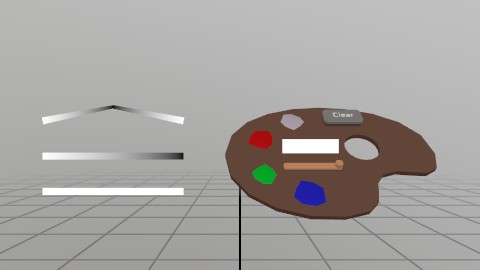

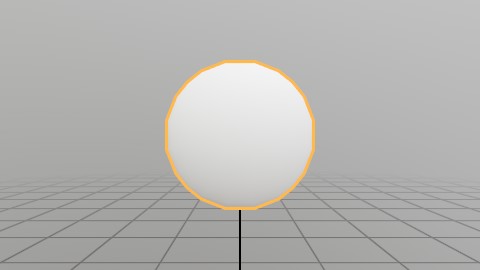

Material Chain

Materials can be chained together to create a multi-pass material! What you’re seeing in this sample is an ‘Inverted Shell’ outline, a two-pass effect where a second render pass is scaled along the normals and flipped inside-out.

Math

StereoKit has a SIMD optimized math library that provides a wide array of high-level math functions, shapes, and intersection formulas!

In C#, math types are backed by System.Numerics for easy interop with code from the rest of the C# ecosystem.
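A brief sketch of that interop, assuming the implicit conversions between `Vec3` and `System.Numerics.Vector3`: a StereoKit vector is passed through a System.Numerics rotation and back, followed by one of the built-in intersection tests. The specific values are arbitrary.

```csharp
using System;
using System.Numerics;
using StereoKit;

class Program
{
    static void Main()
    {
        if (!SK.Initialize(new SKSettings { appName = "Math" })) return;

        // Vec3 converts implicitly to and from System.Numerics.Vector3.
        Vec3    skVec   = new Vec3(1, 0, 0);
        Vector3 numVec  = skVec;
        Vector3 rotated = Vector3.Transform(
            numVec,
            Quaternion.CreateFromAxisAngle(Vector3.UnitY, (float)Math.PI / 2));
        Vec3 back = rotated;
        Log.Info($"Rotated: {back}");

        // A shape intersection test: a ray pointing forward at a sphere.
        // Note that Sphere takes a diameter, not a radius.
        Ray    ray    = new Ray(Vec3.Zero, Vec3.Forward);
        Sphere sphere = new Sphere(new Vec3(0, 0, -2), 0.5f);
        if (ray.Intersect(sphere, out Vec3 at))
            Log.Info($"Ray hits the sphere at {at}");

        SK.Run(() => { });
    }
}
```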

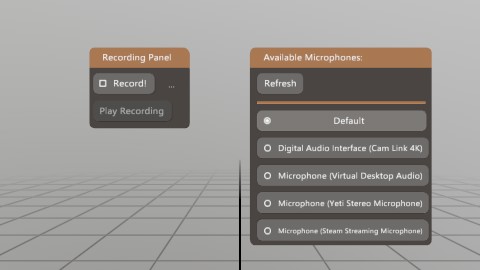

Microphone

Sometimes you need direct access to the microphone data! Maybe for a special effect, or maybe you just need to stream it to someone else. Well, there’s an easy API for that!

This demo shows how to grab input from the microphone, and use it to drive an indicator that tells users that you’re listening!
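One way this could look, as a sketch rather than the demo's actual code: read any unread samples each frame, average their absolute values, and use that to scale a sphere. The scale factor and sphere position are arbitrary assumptions.

```csharp
using System;
using StereoKit;

class Program
{
    static float[] buffer = new float[0];

    static void Main()
    {
        if (!SK.Initialize(new SKSettings { appName = "Microphone" })) return;

        // Start recording from the default microphone.
        Microphone.Start();

        SK.Run(() =>
        {
            if (!Microphone.IsRecording) return;

            // Make sure the buffer can hold everything that's waiting.
            int unread = (int)Microphone.Sound.UnreadSamples;
            if (buffer.Length < unread)
                buffer = new float[unread];

            // Read the new samples and average their magnitude.
            int   count = (int)Microphone.Sound.ReadSamples(ref buffer);
            float power = 0;
            for (int i = 0; i < count; i++)
                power += Math.Abs(buffer[i]);
            float intensity = count > 0 ? power / count : 0;

            // A sphere that swells with the input volume.
            float size = 0.05f + intensity * 0.5f;
            Mesh.Sphere.Draw(Material.Default, Matrix.TS(new Vec3(0, 0, -0.5f), size));
        });

        Microphone.Stop();
    }
}
```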

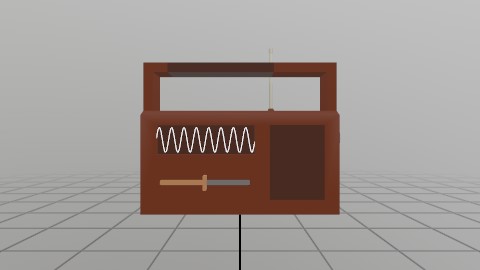

Model Nodes

ModelNode API lets…

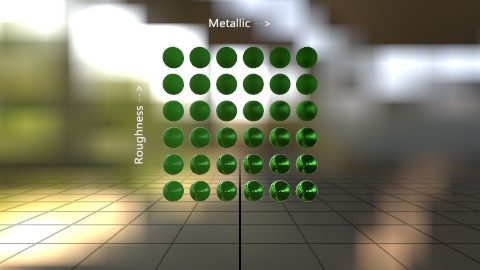

PBR Shaders

Shaders!

File Picker

Applications need to save and load files at runtime! StereoKit has a cross-platform, MR compatible file picker built in, Platform.FilePicker.

On systems/conditions where a native file picker is available, that’s what you’ll get! Otherwise, StereoKit will fall back to a custom picker built with StereoKit’s UI.
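A minimal sketch of opening a model through the picker from a UI button. The window title, pose, and file filters are assumptions for illustration.

```csharp
using StereoKit;

class Program
{
    static Model model;
    static Pose  windowPose = new Pose(new Vec3(0, 0, -0.5f), Quat.LookDir(0, 0, 1));

    static void Main()
    {
        if (!SK.Initialize(new SKSettings { appName = "File Picker" })) return;

        SK.Run(() =>
        {
            UI.WindowBegin("Files", ref windowPose);
            // Only open a new picker if one isn't already showing.
            if (UI.Button("Open Model") && !Platform.FilePickerVisible)
            {
                Platform.FilePicker(
                    PickerMode.Open,
                    file => model = Model.FromFile(file), // called when a file is chosen
                    null,                                 // nothing to do on cancel
                    ".gltf", ".glb");
            }
            UI.WindowEnd();

            // Draw whatever was loaded, if anything.
            model?.Draw(Matrix.TS(new Vec3(0.3f, 0, -0.5f), 0.2f));
        });
    }
}
```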

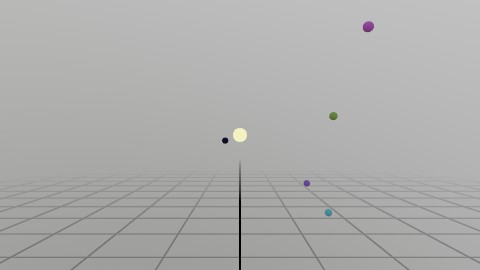

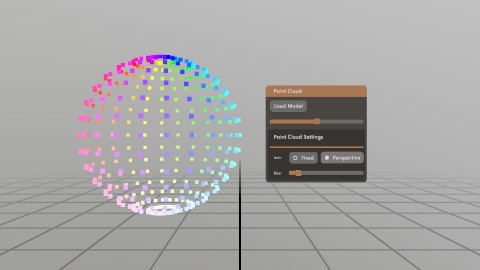

Point Clouds

Point clouds are not a built-in feature of StereoKit, but it’s not hard to do this yourself! Check out the code for this demo for a class that’ll help you do this directly from data, or from a Model.

Ray to Mesh

Record Mic

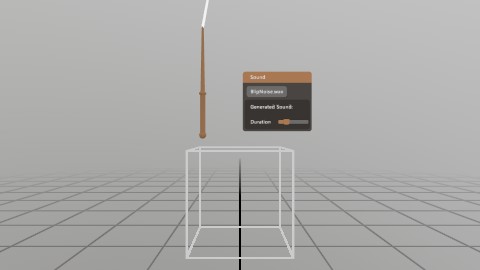

A common use case for the microphone would be to record a snippet of audio! This demo shows reading data from the Microphone, and using that to create a sound for playback.

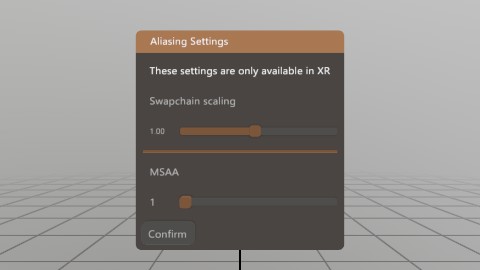

Render Scaling

Sometimes you need to boost the number of pixels your app renders, to reduce jaggies! Renderer.Scaling and Renderer.Multisample let you increase the size of the draw surface, and multisample each pixel.

This is powerful stuff, so use it sparingly!
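For illustration, the two properties named above might be set like this after initialization. The values are arbitrary assumptions; higher numbers cost more GPU time, and the property names may differ in other StereoKit versions.

```csharp
using StereoKit;

class Program
{
    static void Main()
    {
        if (!SK.Initialize(new SKSettings { appName = "Render Scaling" })) return;

        // Render at 1.5x the default surface size in each dimension.
        Renderer.Scaling = 1.5f;
        // Take 4 samples per pixel to smooth out edges.
        Renderer.Multisample = 4;

        SK.Run(() =>
        {
            Mesh.Cube.Draw(Material.Default, Matrix.TS(new Vec3(0, 0, -0.5f), 0.1f));
        });
    }
}
```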

Skeleton Estimation

With knowledge about where the head and hands are, you can make a decent guess about where some other parts of the body are! The StereoKit repository contains an AvatarSkeleton IStepper to show a basic example of how something like this can be done.

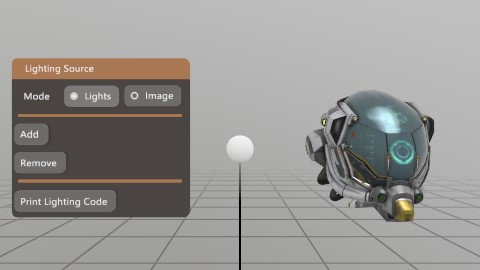

Sky Editor

Sound

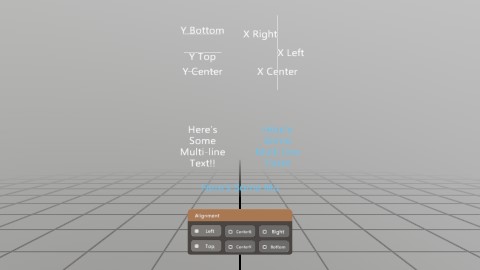

Text

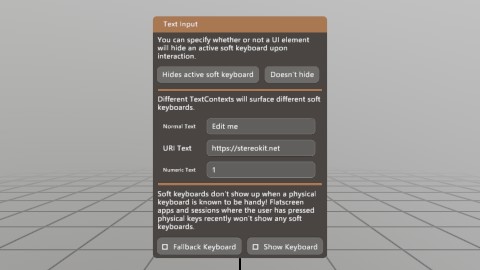

Text Input

Procedural Textures

Here’s a quick sample of procedurally assembling a texture!
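As a sketch of the general approach (not the demo's own code), this fills a color array with a checkerboard, uploads it to a texture, and applies it to a copy of the default material. The texture size and pattern are assumptions.

```csharp
using StereoKit;

class Program
{
    static void Main()
    {
        if (!SK.Initialize(new SKSettings { appName = "Procedural Textures" })) return;

        // Fill a square array of colors with an 8x8 pixel checkerboard.
        const int size   = 64;
        Color32[] colors = new Color32[size * size];
        for (int y = 0; y < size; y++)
        for (int x = 0; x < size; x++)
        {
            bool light = ((x / 8) + (y / 8)) % 2 == 0;
            byte v     = (byte)(light ? 255 : 60);
            colors[x + y * size] = new Color32(v, v, v, 255);
        }

        // Upload the pixel data to a new texture.
        Tex tex = new Tex(TexType.Image, TexFormat.Rgba32);
        tex.SetColors(size, size, colors);

        // Use it as the diffuse texture on a copy of the default material.
        Material mat = Material.Default.Copy();
        mat[MatParamName.DiffuseTex] = tex;

        SK.Run(() =>
        {
            Mesh.Cube.Draw(mat, Matrix.TS(new Vec3(0, 0, -0.5f), 0.2f));
        });
    }
}
```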

UI

…

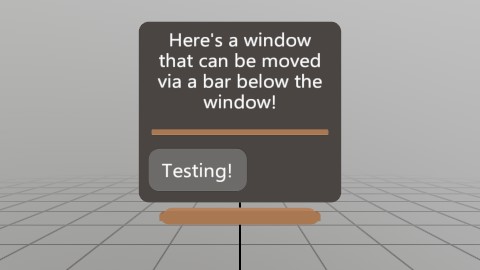

UI Grab Bar

This is an example of using external handles to manipulate a Window’s pose! Since you own the Pose data, you can do whatever you want with it! The grab bar below the window is a common sight in recent XR UI, so here’s one way to replicate that with SK’s API.

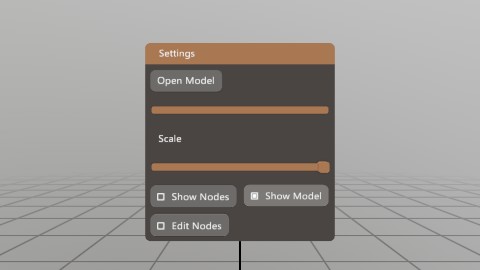

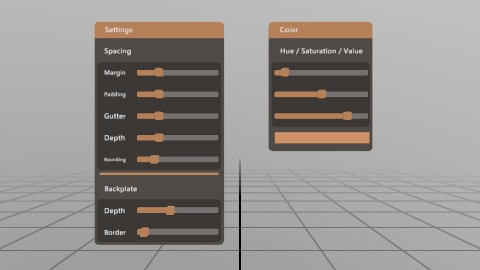

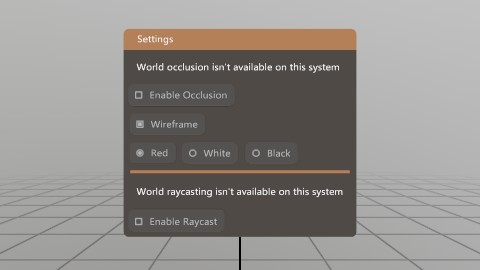

UI Settings

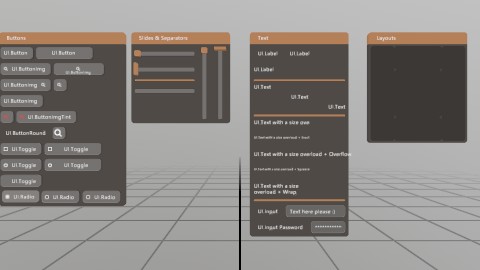

UI Tearsheet

An enumeration of all the different types of UI elements!

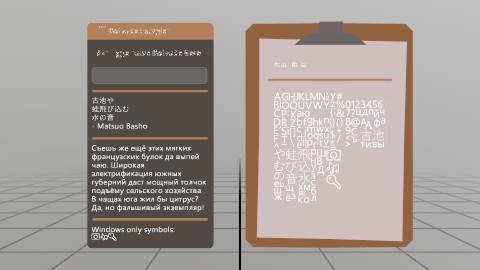

Unicode Text

World Anchor

This demo uses UWP’s Spatial APIs to add, remove, and load World Anchors that are locked to local physical locations. These can be used for persisting locations across sessions, or increasing the stability of your experiences!

World Mesh

Found an issue with these docs, or have some additional questions? Create an Issue on GitHub!